The FFT (Fast Fourier Transform) is an efficient algorithm for computing the discrete Fourier transform, which converts a time-domain signal into its frequency-domain representation. In music informatics, the FFT matters because it lets us see which frequency components are present in a piece of audio and how strong each one is. This frequency spectrum supports tasks such as audio classification, chord recognition, and audio effects processing.

Understanding the FFT is essential for research in AI and music. Spectral features derived from it give AI models access to the spectral and timbral characteristics of music, which supports tasks like music classification, genre recognition, and content-based recommendation. The FFT is also valuable for music generation, composition, and audio effects processing.
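To make the time-domain to frequency-domain conversion concrete, here is a minimal sketch in Python using only the standard library. It is not the code of the sketch on this page: the recursive radix-2 `fft` function, the sample rate, the block size, and the 440 Hz test tone are all assumptions chosen so that the tone falls exactly on one frequency bin.

```python
import cmath
import math


def fft(samples):
    """Recursive radix-2 Cooley-Tukey FFT. len(samples) must be a power of two."""
    n = len(samples)
    if n == 1:
        return [complex(samples[0])]
    even = fft(samples[0::2])  # transform of the even-indexed samples
    odd = fft(samples[1::2])   # transform of the odd-indexed samples
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * math.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


# Assumed values for illustration: each bin is SAMPLE_RATE / N = 8 Hz wide.
SAMPLE_RATE = 8192  # samples per second
N = 1024            # samples per analysis block (power of two)
TONE_HZ = 440.0     # test tone, lands exactly on bin 55

# Time-domain signal: one block of a pure sine wave.
signal = [math.sin(2 * math.pi * TONE_HZ * t / SAMPLE_RATE) for t in range(N)]

# Frequency-domain representation: keep the bins below the Nyquist frequency.
spectrum = fft(signal)
magnitudes = [abs(c) for c in spectrum[: N // 2]]

# The strongest bin tells us which frequency dominates the signal.
peak_bin = max(range(len(magnitudes)), key=magnitudes.__getitem__)
peak_hz = peak_bin * SAMPLE_RATE / N
print(f"strongest component: {peak_hz:.1f} Hz")
```

Each entry of `magnitudes` describes how strongly one narrow frequency range is present in the block. Real music spreads energy across many bins at once, which is why visualisations usually group bins into a few wider bands.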

The sketch above uses an FFT to split the audio into bass, middle, and treble bands, and visualises the level of each band as a bar. Follow the comments numbered 1 to 5 to make sense of the code.
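The band-splitting idea can be sketched independently of the sketch's own code. The Python example below is an illustration under stated assumptions, not the sketch's implementation: the band edges in `BANDS`, the helper name `band_levels`, the sample rate, and the three-tone test signal are all hypothetical. It sums the FFT energy that falls inside each band and prints a text bar per band.

```python
import cmath
import math


def fft(samples):
    """Recursive radix-2 Cooley-Tukey FFT. len(samples) must be a power of two."""
    n = len(samples)
    if n == 1:
        return [complex(samples[0])]
    even, odd = fft(samples[0::2]), fft(samples[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * math.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


# Hypothetical band edges in Hz (low inclusive, high exclusive).
BANDS = {"bass": (20, 250), "mid": (250, 4000), "treble": (4000, 8000)}


def band_levels(samples, sample_rate):
    """Return one level per band: the root of the summed squared bin amplitudes."""
    n = len(samples)
    spectrum = fft(samples)
    levels = {}
    for name, (low_hz, high_hz) in BANDS.items():
        first = math.ceil(low_hz * n / sample_rate)
        stop = min(math.ceil(high_hz * n / sample_rate), n // 2)
        # Dividing by n / 2 turns a bin magnitude into a sine amplitude.
        energy = sum((abs(spectrum[k]) / (n / 2)) ** 2 for k in range(first, stop))
        levels[name] = math.sqrt(energy)
    return levels


# Assumed test signal: three tones, one per band, with decreasing amplitude.
SAMPLE_RATE = 16384  # bin width is 16384 / 2048 = 8 Hz
N = 2048
TONES = [(96.0, 1.0), (1000.0, 0.5), (6000.0, 0.25)]  # (frequency in Hz, amplitude)

signal = [
    sum(amp * math.sin(2 * math.pi * hz * t / SAMPLE_RATE) for hz, amp in TONES)
    for t in range(N)
]

levels = band_levels(signal, SAMPLE_RATE)
for name, level in levels.items():
    print(f"{name:>6} {'#' * round(level * 40)}")
```

Running this on successive blocks of audio, and redrawing the bars each time, gives the kind of animated display the sketch shows.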

Try replacing the sound with bee.mp3. Note which frequency bands respond most strongly to changes in the recording (e.g. the microphone being moved, rather than the bee noises themselves).

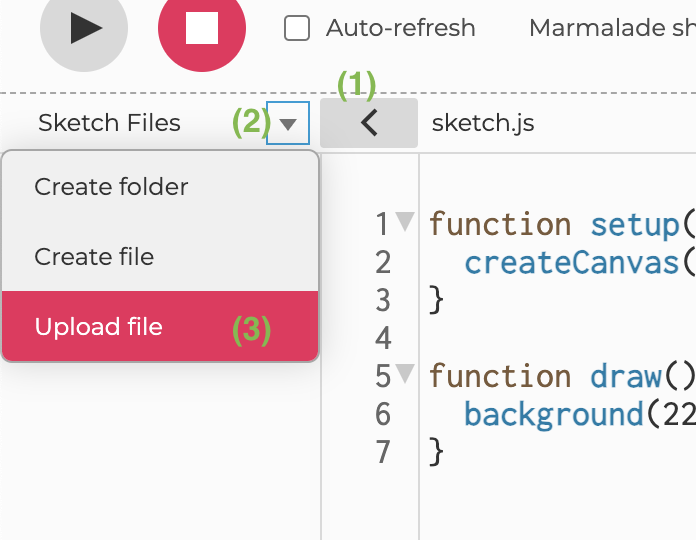

Use the symbol on the right-hand side to view the code for the sketch below.
